Short Bio

I am a Ph.D. student at The Chinese University of Hong Kong, under the supervision of Prof. Hong CHENG.

Previously, I received my B.S. and M.S. in Computer Science from Fudan University in 2021 and 2024, respectively, under the supervision of Prof. Yun XIONG.

My research interests are in machine learning and language technologies, including reasoning, planning, and information access in language systems. Before focusing on language technologies, I conducted research on graph learning.

Recent News

- [Aug 2025] Our paper MoLoRAG was accepted to EMNLP'2025. See you in Suzhou ✨

- [May 2025] Our paper LLMNodeBed was accepted to ICML'2025. See you in Vancouver again 😄

- [Nov 2024] I was awarded NeurIPS'2024 Top Reviewer 🥳

- [Sep 2024] Our paper GNN4TaskPlan was accepted to NeurIPS'2024. See you in Vancouver!

Research Highlights

-

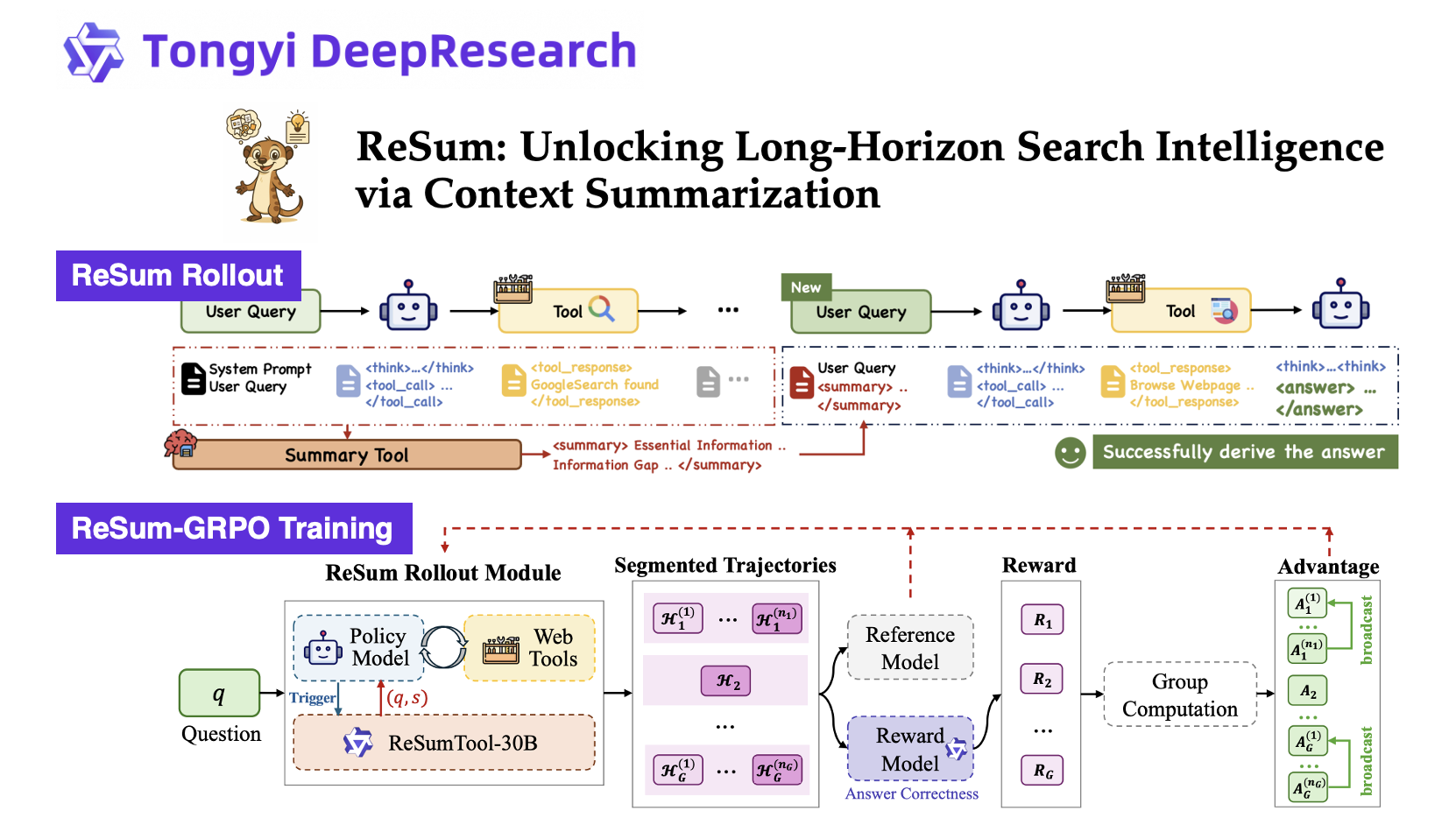

ReSum: Context Summarization for Long-Horizon Reasoning

ReSum studies periodic context summarization as a way to support longer reasoning processes in language systems. It is designed as a lightweight extension that requires minimal changes to existing ReAct workflows, and it shows improved performance on challenging information-seeking benchmarks such as BrowseComp and GAIA.

-

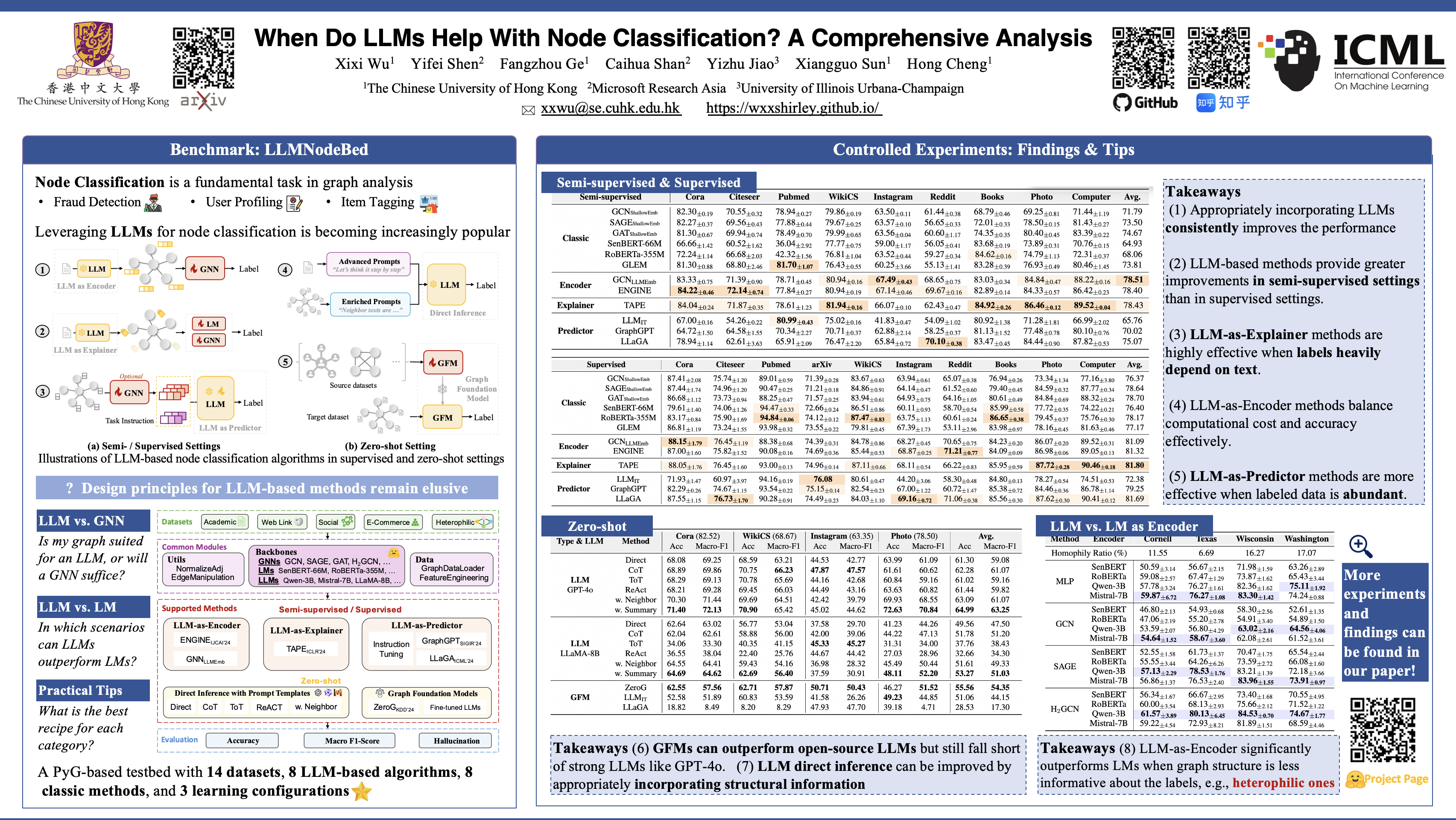

Benchmark of LLM4Graph Algorithms (ICML'2025)

We introduce LLMNodeBed, a PyG-based testbed for LLM-based node classification algorithms. It integrates 14 datasets, supports 8 LLM-based and 8 classic methods, and covers 3 learning configurations. With LLMNodeBed, we train and evaluate over 2,700 models to analyze the effects of factors like LLM type and size, prompt, learning paradigm, and dataset homophily. Our study reveals 8 key insights, including (1) optimal settings for each algorithm category, and (2) scenarios where LLMs significantly outperform LMs.

-

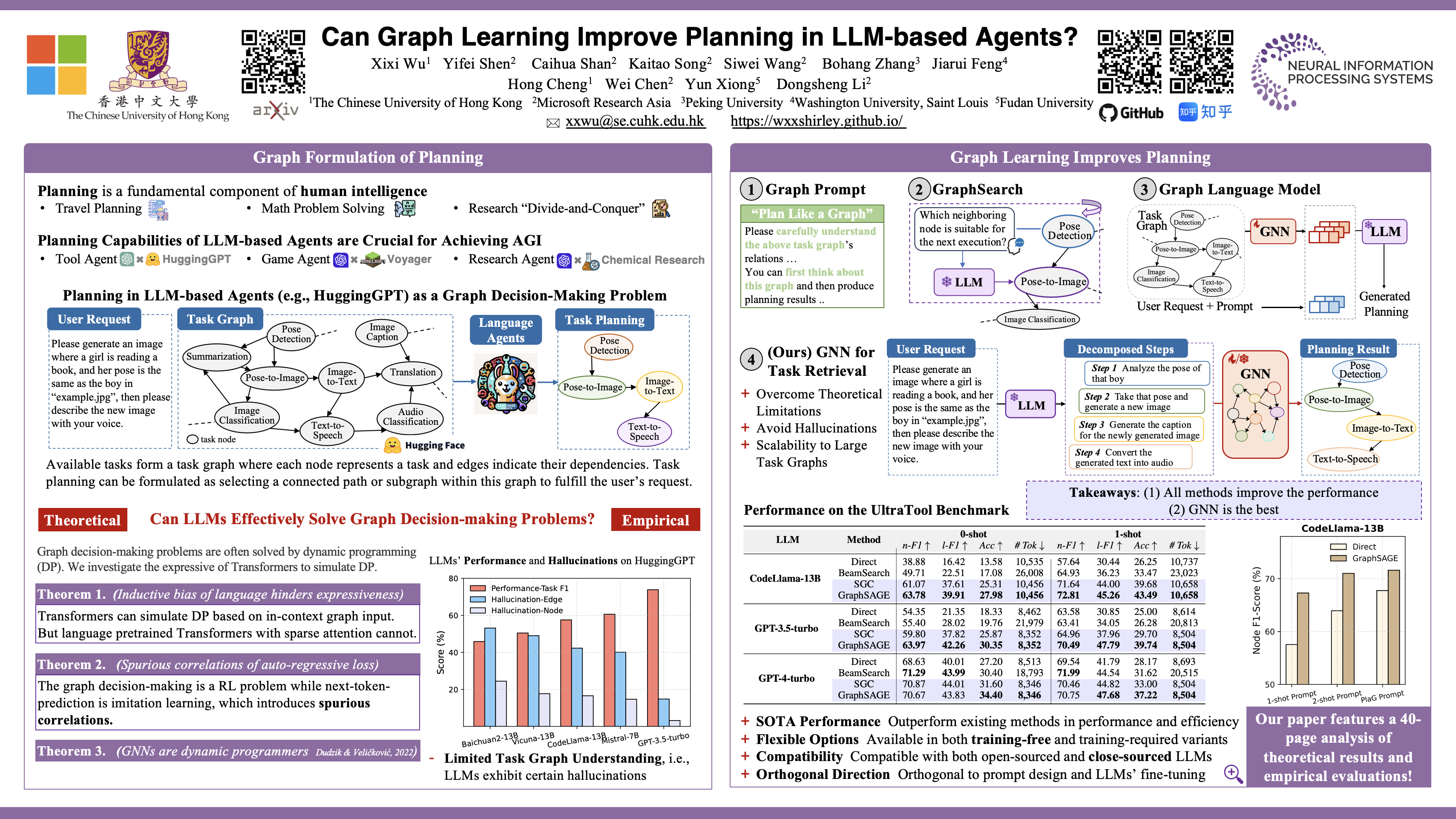

Graph Learning for Task Planning (NeurIPS'2024)

In language agents, available tasks naturally form a task graph, where nodes represent tasks and edges denote dependencies. In this context, task planning involves selecting a path within this graph to fulfill user requests. We find that the bottleneck in LLMs' planning abilities lies in their limited understanding of the task graph. Therefore, we introduce GNNs as a simple fix, available in both training-free and training-required variants. Extensive experiments demonstrate that GNN-based methods surpass existing solutions even without training.

Selected Publications

-

ReSum: Unlocking Long-Horizon Search Intelligence via Context Summarization

Xixi Wu*, Kuan Li*, Yida Zhao, Liwen Zhang, Litu Ou, Huifeng Yin, Zhongwang Zhang, Xinmiao Yu, Dingchu Zhang, Yong Jiang, Pengjun Xie, Fei Huang, Minhao Cheng, Shuai Wang, Hong Cheng, and Jingren Zhou

arXiv preprint, 2025

-

Repurposing Synthetic Data for Fine-grained Search Agent Supervision

Yida Zhao, Kuan Li, Xixi Wu, Liwen Zhang, Dingchu Zhang, Baixuan Li, Maojia Song, Zhuo Chen, Chenxi Wang, Xinyu Wang, Kewei Tu, Pengjun Xie, Jingren Zhou, and Yong Jiang

To appear, Proceedings of the International Conference on Learning Representations (ICLR), 2026

-

MoLoRAG: Bootstrapping Document Understanding via Multi-modal Logic-aware Retrieval

Xixi Wu, Yanchao Tan, Nan Hou, Ruiyang Zhang, and Hong Cheng

Proceedings of the Conference on Empirical Methods in Natural Language Processing (EMNLP), 2025

-

When Do LLMs Help With Node Classification? A Comprehensive Analysis

Xixi Wu, Yifei Shen, Fangzhou Ge, Caihua Shan, Yizhu Jiao, Xiangguo Sun, and Hong Cheng

Proceedings of the 42nd International Conference on Machine Learning (ICML), 2025

-

Can Graph Learning Improve Planning in LLM-based Agents?

Xixi Wu*, Yifei Shen*, Caihua Shan, Kaitao Song, Siwei Wang, Bohang Zhang, Jiarui Feng, Hong Cheng, Wei Chen, Yun Xiong, and Dongsheng Li

Proceedings of the 38th Conference on Neural Information Processing Systems (NeurIPS), 2024

-

ProCom: A Few-shot Targeted Community Detection Algorithm

Xixi Wu, Kaiyu Xiong, Yun Xiong, Xiaoxin He, Yao Zhang, Yizhu Jiao, and Jiawei Zhang

Proceedings of the 30th ACM SIGKDD Conference on Knowledge Discovery and Data Mining (KDD), 2024

-

ConsRec: Learning Consensus Behind Interactions for Group Recommendation

Xixi Wu, Yun Xiong, Yao Zhang, Yizhu Jiao, Jiawei Zhang, Yangyong Zhu, and Philip S. Yu

Proceedings of the ACM Web Conference (WWW), 2023

-

CLARE: A Semi-supervised Community Detection Algorithm

Xixi Wu, Yun Xiong, Yao Zhang, Yizhu Jiao, Caihua Shan, Yiheng Sun, Yangyong Zhu, and Philip S. Yu

Proceedings of the 28th ACM SIGKDD Conference on Knowledge Discovery and Data Mining (KDD), 2022

-

DDIPrompt: Drug-Drug Interaction Event Prediction based on Graph Prompt Learning

Yingying Wang, Yun Xiong, Xixi Wu, Xiangguo Sun, Jiawei Zhang, and Guangyong Zheng

Proceedings of the 33rd ACM International Conference on Information and Knowledge Management (CIKM), 2024

-

Towards Adaptive Neighborhood for Advancing Temporal Interaction Graph Modeling

Siwei Zhang, Xi Chen, Yun Xiong, Xixi Wu, Yao Zhang, Yongrui Fu, Yinglong Zhao, and Jiawei Zhang

Proceedings of the 30th ACM SIGKDD Conference on Knowledge Discovery and Data Mining (KDD), 2024

-

Dual Intents Graph Modeling for User-centric Group Discovery

Xixi Wu, Yun Xiong, Yao Zhang, Yizhu Jiao, and Jiawei Zhang

Proceedings of the 32nd ACM International Conference on Information and Knowledge Management (CIKM), 2023

Experience

-

Microsoft Research Asia

Research Intern, Shanghai AI/ML Group Feb. 2024 - Jun. 2024

-

Alibaba Group

Research Intern, Tongyi Lab Jun. 2025 - Oct. 2025

Selected Awards

- ACM Web Conference Student Travel Award 2023

- Second Class Scholarship for Outstanding Student, Fudan University 2018 & 2021

- Second Prize of Undergraduate Mathematical Contest in Modeling, Shanghai, China (CUMCM) 2019

- First Prize in National Olympiad in Mathematics in Provinces, Jiangsu, China 2016

Professional Services

- Conference Reviewer: NeurIPS'2024 (Top Reviewer Award🏆) - 2025, ICLR'2025 - 2026, ICML'2025 - 2026, WWW'2024 Graph Foundation Model (GFM) Workshop, SIGKDD'2024 - 2025, AAAI'2026

- Journal Reviewer: IEEE Transactions on Knowledge and Data Engineering (TKDE), Transactions on Machine Learning Research (TMLR)

Miscellaneous

- I love sports like swimming 🏊 and running 🏃. I also enjoy cooking Chinese food 🤣

- During my undergraduate studies, I was interested in mobile app development (you can find all the source code on my GitHub):

Lose Weight, a Flutter App

Hulv, a Mini-Program

Chatroom, a Desktop App

- I enjoy exploring the unknown and strive to keep moving forward on this path of discovery and learning ✨